
Stop Broadcom DevTest After 500 Requests Using Stateful Rules

By Arti Bandi

Compare how Broadcom DevTest and Beeceptor simulate API request limits. Beeceptor uses declarative counters and stateful rules without scripting or runtime assertions.

One of the interesting discussions in the Broadcom DevTest community revolved around a deceptively simple requirement: stop a virtual service after it receives 500 API calls.

This is a very basic testing scenario. Many QA engineers and SDETs need this behavior when simulating:

- API consumption quotas

- sandbox usage limits

- trial account exhaustion

- rate-limited integrations

- one-time token validation flows

However, the implementation approach proposed in the discussion reveals a deeper architectural difference between traditional service virtualization platforms like DevTest and modern declarative mock servers like Beeceptor. Let's look at both.

Broadcom DevTest - Rate Limiting

In Broadcom DevTest, the proposed solution relied on scripting inside the virtual service runtime. The official implementation added a scripted assertion directly to the HTTP/S Listen Step and used SharedModelMap to persist a global counter across requests. The engineer suggested storing the counter as a thread-safe AtomicInteger object, because concurrent requests could otherwise corrupt the count.

With this setup, every incoming request:

- retrieved the shared counter

- incremented it programmatically

- checked whether the count exceeded 500

- conditionally stopped serving responses
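To make the per-request steps above concrete, here is a minimal plain-Java sketch of the counter logic such an assertion performs. It is not DevTest's API: a `ConcurrentHashMap` stands in for `SharedModelMap`, and the class names `SharedState` and `RequestLimitAssertion`, the key `apiCallCounter`, and the `reset()` helper are hypothetical. It shows why the thread-safe counter matters: each check is a single atomic update.

```java
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.atomic.AtomicInteger;

// Hypothetical stand-in for DevTest's SharedModelMap: one process-wide map
// visible to every request thread. Plain Java, not the DevTest API.
final class SharedState {
    static final ConcurrentHashMap<String, Object> MAP = new ConcurrentHashMap<>();
}

// Hypothetical model of the scripted assertion on the HTTP/S Listen Step.
final class RequestLimitAssertion {
    static final String COUNTER_KEY = "apiCallCounter"; // illustrative key name
    static final int LIMIT = 500;

    /** Returns true if the current request may be served, false once LIMIT is exceeded. */
    static boolean allowRequest() {
        // computeIfAbsent is atomic on ConcurrentHashMap, so concurrent first
        // requests all end up sharing the same AtomicInteger instance.
        AtomicInteger counter = (AtomicInteger) SharedState.MAP
                .computeIfAbsent(COUNTER_KEY, k -> new AtomicInteger());
        // updateAndGet is one atomic read-modify-write, so no two threads see
        // the same count. The count stops at LIMIT + 1 so it cannot overflow.
        int count = counter.updateAndGet(c -> c > LIMIT ? c : c + 1);
        return count <= LIMIT;
    }

    /** Models "manually clearing shared state": the next request starts from zero. */
    static void reset() {
        SharedState.MAP.remove(COUNTER_KEY);
    }
}
```

Calling `allowRequest()` from many threads at once still admits exactly 500 requests, which a plain `int` incremented with `count++` would not guarantee.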

After crossing the limit, the virtual service effectively stopped responding to new requests. The service itself remained deployed and active inside DevTest, but the assertion prevented downstream processing. Resetting the counter required either restarting the virtual service or manually clearing the shared state.

The discussion also suggested that the script could go further and:

- trigger virtual service shutdown APIs to save resources

- send custom HTTP error responses like 429

- dynamically change service behavior after threshold exhaustion
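For the 429 variant, here is a sketch under the same assumptions: plain Java rather than DevTest's scripting API, with hypothetical `MockResponse` and `QuotaResponder` classes and illustrative JSON bodies. Instead of going silent after the limit, the responder switches to an HTTP 429 response.

```java
import java.util.concurrent.atomic.AtomicInteger;

// Hypothetical response type for this sketch; DevTest's own response objects differ.
final class MockResponse {
    final int status;
    final String body;

    MockResponse(int status, String body) {
        this.status = status;
        this.body = body;
    }
}

// Hypothetical responder: normal responses within the quota, HTTP 429 after it.
final class QuotaResponder {
    private final AtomicInteger served = new AtomicInteger();
    private final int limit;

    QuotaResponder(int limit) {
        this.limit = limit;
    }

    MockResponse respond() {
        // Capped atomic increment: safe under concurrent requests, never overflows.
        int count = served.updateAndGet(c -> c > limit ? c : c + 1);
        if (count <= limit) {
            return new MockResponse(200, "{\"status\":\"ok\"}");
        }
        // Past the threshold, change behavior instead of silently dropping the request.
        return new MockResponse(429, "{\"error\":\"quota exceeded\"}");
    }
}
```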

The entire solution was built with embedded Groovy/Java scripting rather than declarative service configuration.

Beeceptor - Rate Limiting

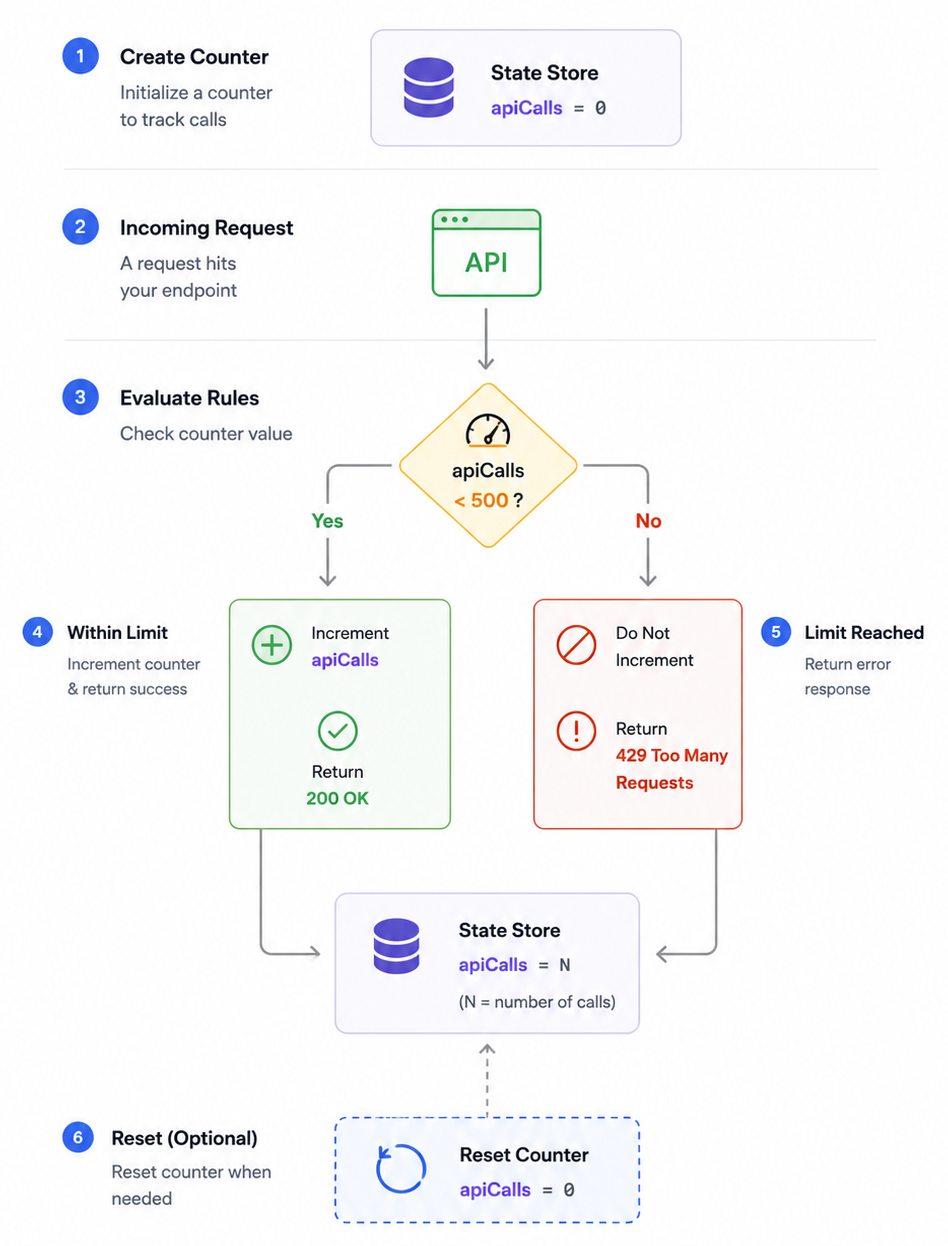

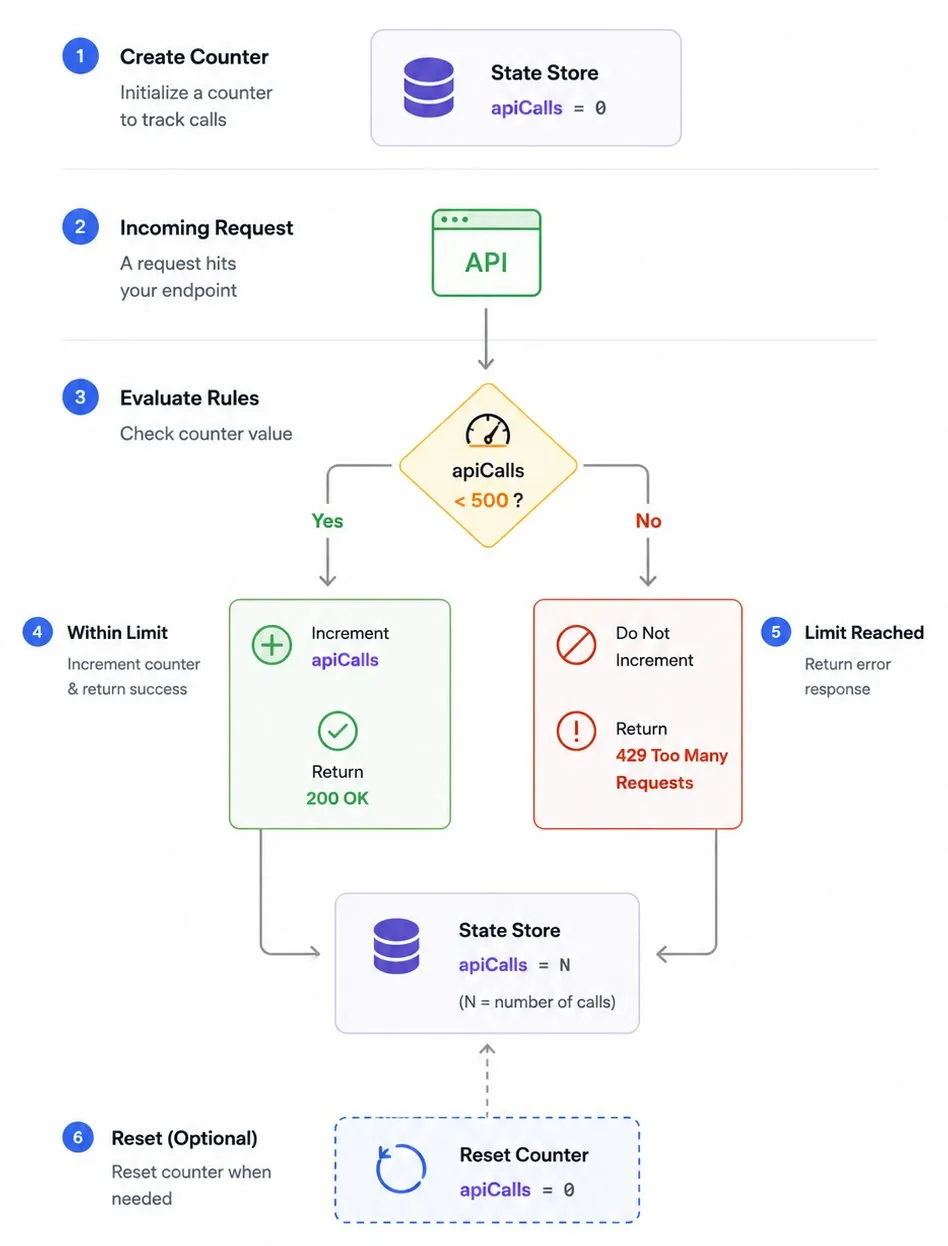

In Beeceptor, the same use case is modeled declaratively.

Beeceptor treats counters and stateful behaviors as native concepts inside the rules engine. Instead of embedding Groovy or Java assertions, the request limit becomes part of the rule configuration itself.

A typical implementation looks like this:

Rule 1:

- Match requests when `counter('apiCalls') < 500`

- Increment the counter

- Return the normal mocked response

Rule 2:

- Match requests when `counter('apiCalls') >= 500`

- Return `429 Too Many Requests`
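To illustrate what the two rules express, here is a toy Java model in which rules are plain data checked in order, with the first match winning (an assumption for this sketch; the two rules here are mutually exclusive, so order does not change the result). It is not Beeceptor's rules engine: `Rule`, `RuleEngine`, `QuotaRules`, and the 404 fallback are hypothetical. What it demonstrates is that the rule author only declares conditions and outcomes, while the engine owns the counter and its locking.

```java
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.function.IntPredicate;

// One rule is data: a condition on the counter, whether to increment, and a status.
final class Rule {
    final IntPredicate when;
    final boolean increment;
    final int status;

    Rule(IntPredicate when, boolean increment, int status) {
        this.when = when;
        this.increment = increment;
        this.status = status;
    }
}

// Toy engine: evaluates rules in order, first match wins. Synchronization
// lives here, so rule authors never write locking code.
final class RuleEngine {
    private final Map<String, Integer> counters = new HashMap<>();
    private final String counterName;
    private final List<Rule> rules;

    RuleEngine(String counterName, List<Rule> rules) {
        this.counterName = counterName;
        this.rules = rules;
    }

    synchronized int handle() {
        int current = counters.getOrDefault(counterName, 0);
        for (Rule rule : rules) {
            if (rule.when.test(current)) {
                if (rule.increment) {
                    counters.put(counterName, current + 1);
                }
                return rule.status;
            }
        }
        return 404; // fallback when no rule matches (a choice made for this sketch)
    }
}

final class QuotaRules {
    // Rule 1: counter('apiCalls') < 500  -> increment, normal response (200)
    // Rule 2: counter('apiCalls') >= 500 -> 429 Too Many Requests
    static RuleEngine create() {
        return new RuleEngine("apiCalls", List.of(
                new Rule(c -> c < 500, true, 200),
                new Rule(c -> c >= 500, false, 429)));
    }
}
```

Because Rule 1 checks the counter before incrementing it, calls 1 through 500 see values 0 through 499 and succeed, and every later call sees 500 and falls through to Rule 2.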

The counter persists automatically as state managed by the mock server. No shared memory management. No synchronization primitives. No runtime scripting.

More importantly, the rule itself clearly communicates the behavior.

The mock server becomes self-documenting:

- first 500 calls succeed

- all later calls return 429

Beeceptor extends this model further using state variables, request filters, and declarative matching rules. Teams can simulate workflows like:

- expiring OAuth tokens after N requests

- quota-based API plans

- retry-until-success flows

- staged onboarding APIs

- limited-use coupon systems

without building procedural orchestration layers.
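The same counter idea extends to workflows such as expiring tokens after N requests or limited-use coupons by keeping one counter per key. Here is a minimal sketch, again a hypothetical plain-Java model rather than Beeceptor configuration: `LimitedUseTokens` and the choice of 401 for an exhausted token are assumptions.

```java
import java.util.concurrent.ConcurrentHashMap;

// Hypothetical limited-use token store: each token (or coupon code) gets its
// own counter, and the response flips once that token's uses run out.
final class LimitedUseTokens {
    private final ConcurrentHashMap<String, Integer> uses = new ConcurrentHashMap<>();
    private final int maxUses;

    LimitedUseTokens(int maxUses) {
        this.maxUses = maxUses;
    }

    /** Returns 200 while the token has uses left, 401 after maxUses successful calls. */
    int authorize(String token) {
        // merge is atomic per key on ConcurrentHashMap; the count is capped at
        // maxUses + 1 so repeated calls with an expired token cannot overflow it.
        int count = uses.merge(token, 1, (old, one) -> old > maxUses ? old : old + one);
        return count <= maxUses ? 200 : 401;
    }
}
```

Each key is counted independently, so exhausting one token or coupon does not affect any other.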

This reflects a key shift in modern API virtualization. Traditional platforms expose programmable runtimes built on an obsolete stack, which makes it difficult to hire people with the right skills. Beeceptor exposes declarative behaviors that even a junior engineer, or an AI assistant, can write for you.

The difference matters because stateful API simulation should not require writing synchronization code just to count requests.